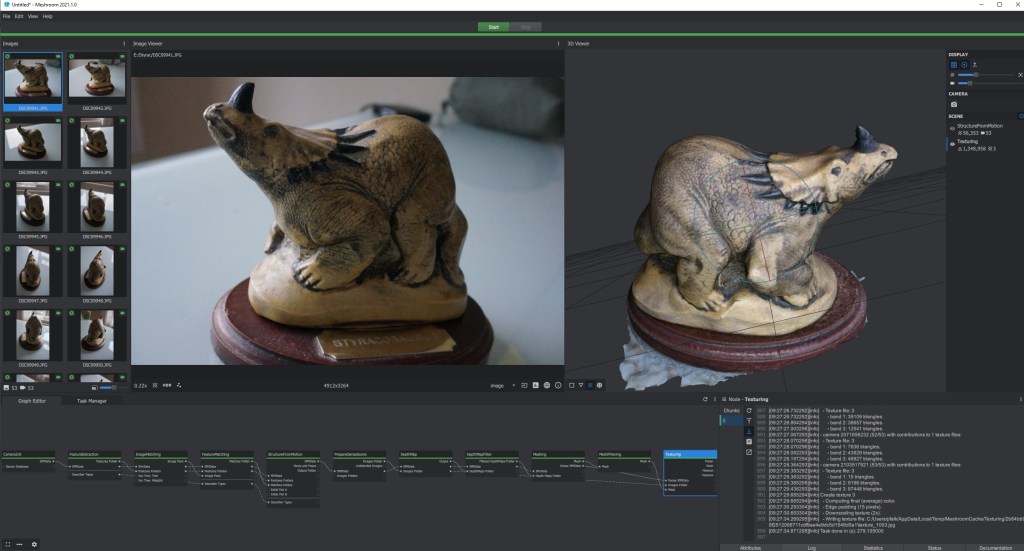

Last week the binaries for AliceVision Meshroom 2021.1.0 were released. I’ll take a look at what’s changed since the last time we took a look at Meshroom.

Meshroom 2020 and texturing.

The last time I posted specifically about Meshroom, it was version 2019.2. I didn’t make a post about 2020.1.0 mainly because texturing broke. In versions after 2019.2, if you set the Texturing node to LSCM or ABF to produce a single texture file, the process would fail. I believe this is down to AliceVision updating the version of Geogram they use. Chris Dean released a workaround on GitHub, where you could download the old texturing node and incorporate it into later versions of Meshroom.

I kept waiting for an update that fixed it, and one never came. Are LSCM and ABF fixed now? Well, I can get them to work, but not like they used to. It seems that the triangle limits are much harder now – high-poly meshes just won’t texture with the LSCM or ABF methods. Which is a shame, because dealing with multiple texture files produced by the basic unwrap is a massive pain in the backside.

Meshroom 2021.1.0 – What’s new?

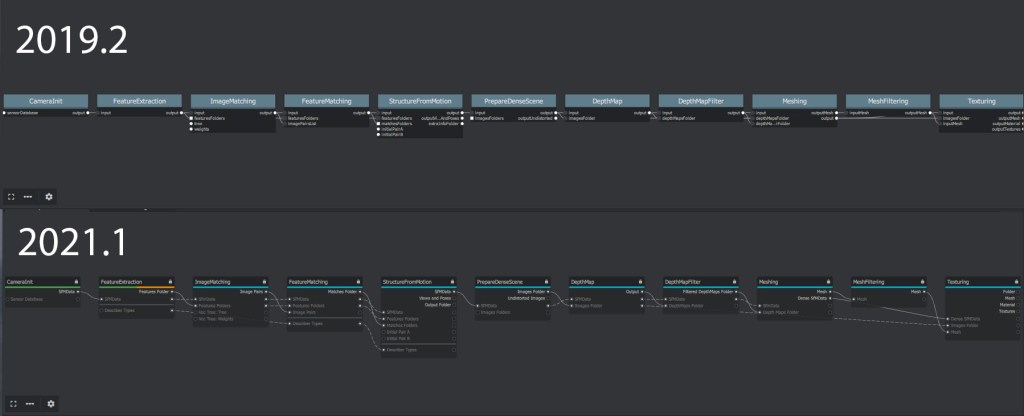

Most obviously (to me) is that the graph editor has been updated. It’s a little bit more technical-looking now, being all grey, but not a huge difference. I like it. But then I often like little visual changes to programs and operating systems, as it keeps them fresh. The new headings of each node aren’t as clear, but the individual lock icons are part of an update to how the locking works, and you can now add to the pipeline while it’s processing.

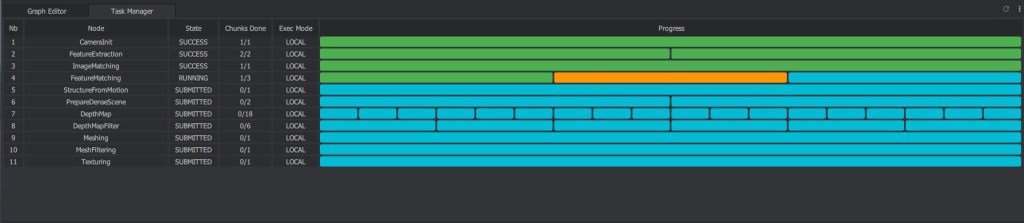

There’s also a cool new task manager tab. I’m not sure this is super useful, but it’s kind of fun to see:

And on the right, the attribute editor and log are joined with a documentation tab (yes!), and a status tab that shows processor and GPU usage for each node. You can easily use the task manager in combination with this to click any part of the process and get info.

Aside from the UI changes, there’s a new PanoramaCompositing node, for dealing with panoramas – not something I can imagine using myself.

SIFT feature extraction has apparently been improved and made more robust (see below).

Meshing has been improved, with new post-processing, and a new ability to smooth and filter a subset of the geometry.

There’s also some changes to the gain/gamma controls in the image Viewer. Again, not something I’d notice in day-to-day use.

Actually, there were more significant changes in Meshroom 2020, which I didn’t write about. Those improvements included lots of nodes to do with HDR, and something I can see myself using: the ability to define a bounding box in the 3D view for meshing a portion of the sparse point cloud.

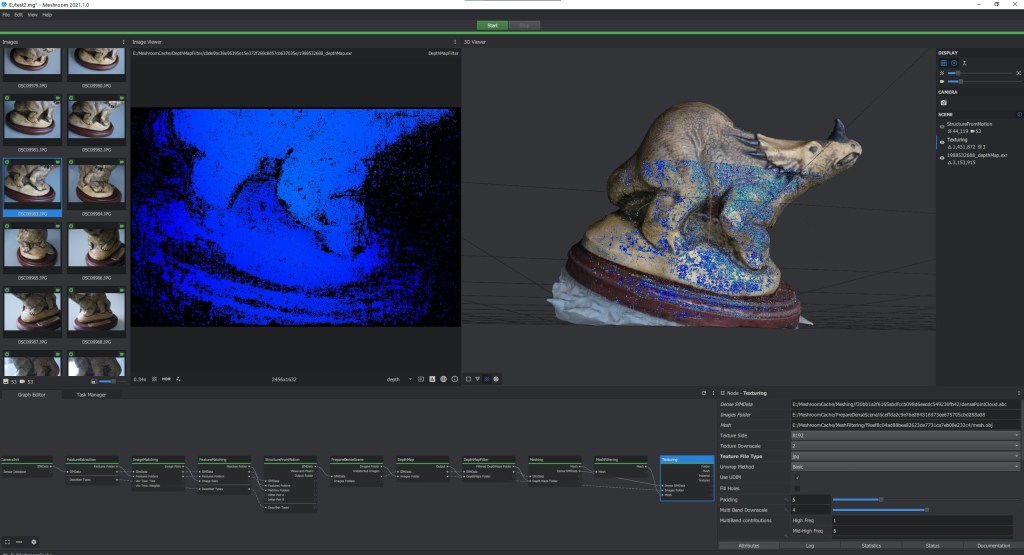

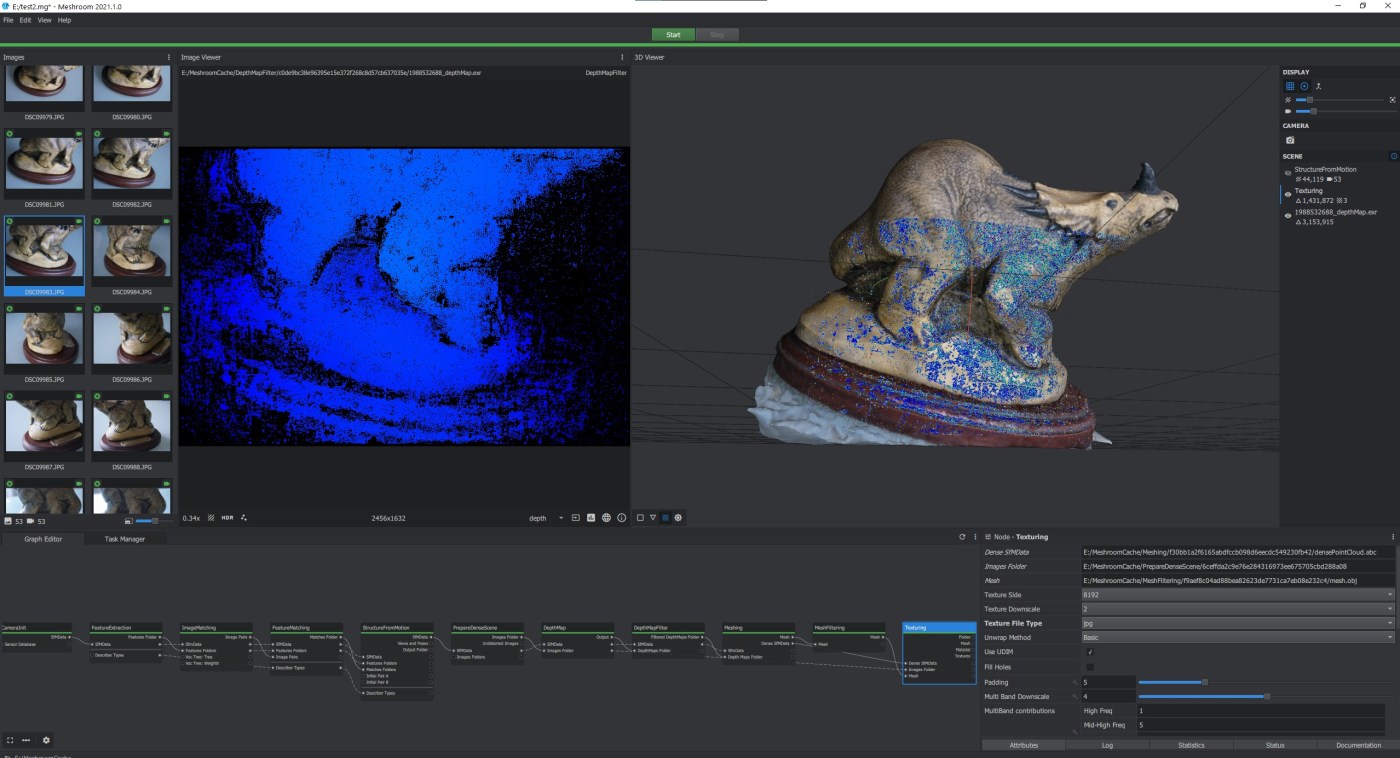

And we can now view depth maps directly in both the image viewer and 3D view:

Meshroom 2021 – improvements in speed/reconstruction?

I ran my standard dataset through Meshroom 2021, and also through 2019.2 (the last version I relied on). For this comparison, I used default settings in both.

| Node | Meshroom 2019.2 | Meshroom 2021.1 |
| --- | --- | --- |
| CameraInit | 00:00:01 | 00:00:00.2 |
| FeatureExtraction | 00:01:24 | 00:19:17 |
| ImageMatching | 00:00:00 | 00:00:03 |
| FeatureMatching | 00:01:18 | 00:04:39 |
| StructureFromMotion | 00:00:57 | 00:01:44 |
| PrepareDenseScene | 00:01:52 | 00:00:50 |
| DepthMap | 00:18:28 | 00:20:07 |
| DepthMapFilter | 00:07:04 | 00:07:13 |
| Meshing | 00:05:29 | 00:06:33 |
| MeshFiltering | 00:00:11 | 00:00:15 |
| Texturing | 00:02:16 | 00:05:11 |
| TOTAL | 00:39:01 | 01:05:52 |
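As a quick sanity check on the table, here is a minimal Python sketch that re-adds the per-node times and finds the node whose time grew the most. The dictionary layout and the helper names `to_seconds` and `fmt` are just for illustration; the numbers are copied from the table.

```python
# Node timings copied from the table: (Meshroom 2019.2, Meshroom 2021.1).
TIMINGS = {
    "CameraInit": ("00:00:01", "00:00:00.2"),
    "FeatureExtraction": ("00:01:24", "00:19:17"),
    "ImageMatching": ("00:00:00", "00:00:03"),
    "FeatureMatching": ("00:01:18", "00:04:39"),
    "StructureFromMotion": ("00:00:57", "00:01:44"),
    "PrepareDenseScene": ("00:01:52", "00:00:50"),
    "DepthMap": ("00:18:28", "00:20:07"),
    "DepthMapFilter": ("00:07:04", "00:07:13"),
    "Meshing": ("00:05:29", "00:06:33"),
    "MeshFiltering": ("00:00:11", "00:00:15"),
    "Texturing": ("00:02:16", "00:05:11"),
}


def to_seconds(stamp):
    """Convert 'HH:MM:SS' (seconds may be fractional) to seconds."""
    hours, minutes, seconds = stamp.split(":")
    return int(hours) * 3600 + int(minutes) * 60 + float(seconds)


def fmt(seconds):
    """Format a number of seconds as 'HH:MM:SS', dropping any fraction."""
    whole = int(seconds)
    return f"{whole // 3600:02d}:{whole % 3600 // 60:02d}:{whole % 60:02d}"


# One total per column: index 0 is 2019.2, index 1 is 2021.1.
totals = [sum(to_seconds(pair[i]) for pair in TIMINGS.values()) for i in (0, 1)]

# The node whose processing time increased the most between versions.
biggest_increase = max(
    TIMINGS,
    key=lambda node: to_seconds(TIMINGS[node][1]) - to_seconds(TIMINGS[node][0]),
)

print("2019.2 total:", fmt(totals[0]))
print("2021.1 total:", fmt(totals[1]))
print("Biggest increase:", biggest_increase)
```

The 2019.2 column adds up to one second less than the total in the table, which is presumably down to rounding of the individual node times.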

So, straight away you can see that with default settings, Meshroom 2021.1.0 is taking significantly longer than the older version. The main source of that difference is the FeatureExtraction node, which went from a minute and a half in v2019.2 to nearly 20 minutes in 2021.1. However, 2021.1 did reconstruct all 53 images, while v2019.2 only matched 51. Relatively few of the programs I test can match all of the images in this less-than-optimal dataset.

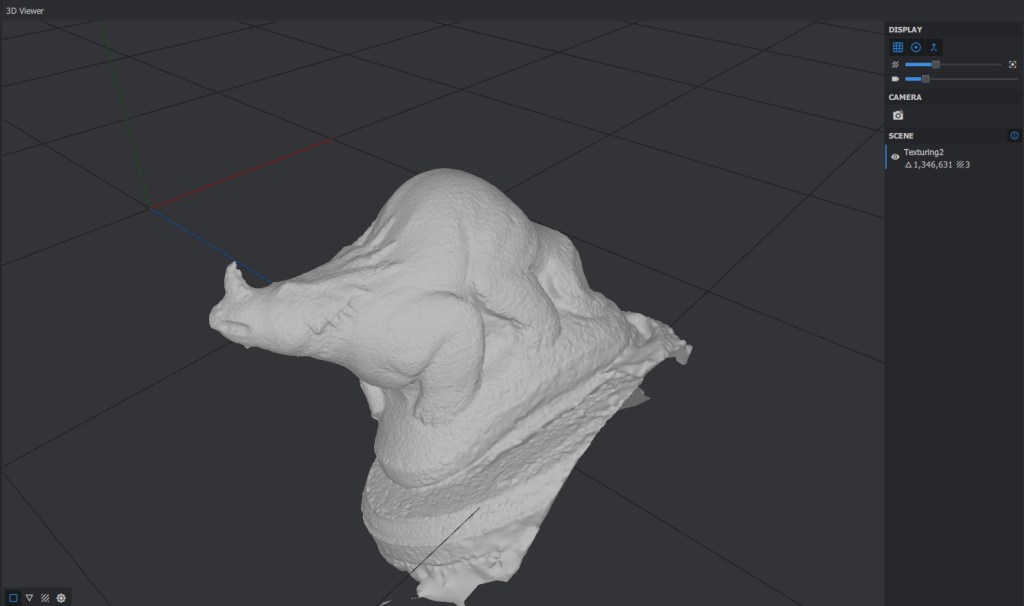

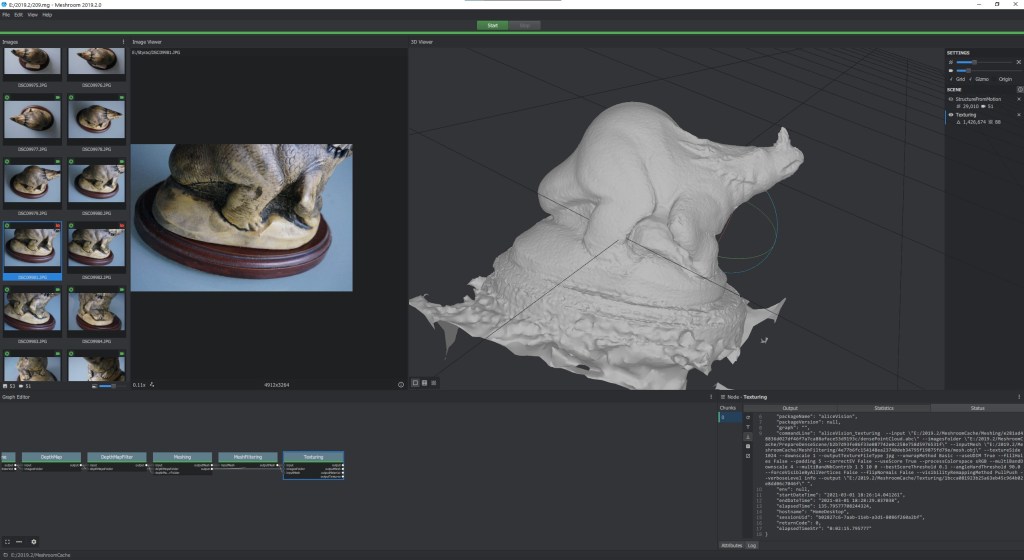

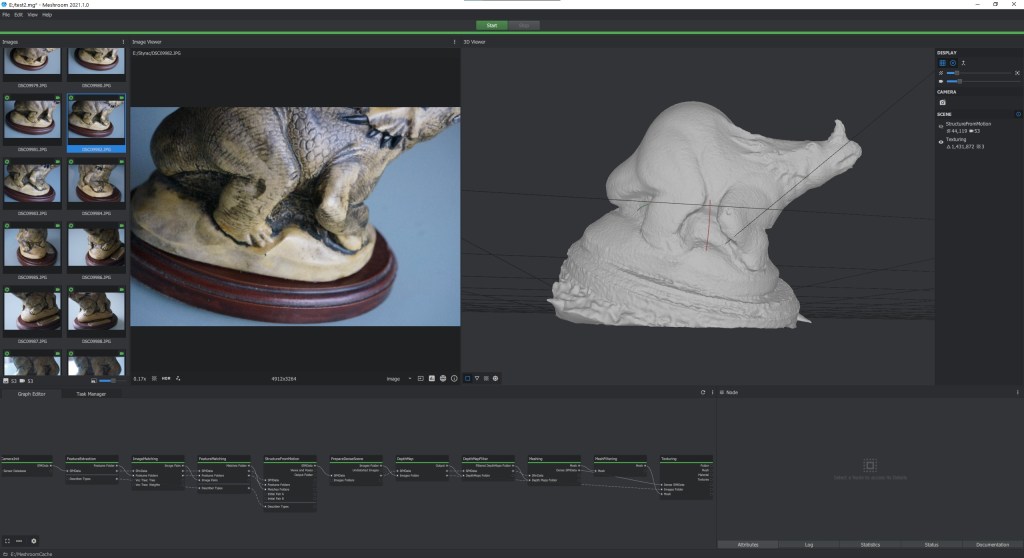

The resultant models weren’t that different though:

The base is cleaner/cropped more in 2021, but otherwise it’s much of a muchness. Both versions produced final meshes of ~1.4 million triangles. I will note that the basic texturing in 2021.1 produced 3 texture files, while basic texturing in 2019.2 produced a whopping 88 texture files.

Texturing with LSCM and ABF

It seems that the LSCM and ABF unwrapping methods do now work, as long as the mesh has a low enough polygon count. The descriptions for each method have always said that LSCM is good for meshes with <600,000 faces, and ABF for <300,000 faces. It’s not rigidly enforced, but it seems I have to use the MeshDecimate node to get the mesh into those ballparks to use anything other than Basic unwrapping. That’s unfortunate, because I really hate dealing with more than one texture file, but I don’t want to have to downsample my meshes to get that.
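To make those limits concrete, here is a small Python sketch that lists which unwrap methods are worth trying for a given face count. The function name and constants are hypothetical and are not part of Meshroom; the thresholds are the ones quoted in the node descriptions, which, as noted, are guidelines rather than hard limits.

```python
# Face-count guidelines quoted in the Texturing node descriptions.
LSCM_MAX_FACES = 600_000
ABF_MAX_FACES = 300_000


def usable_unwrap_methods(face_count):
    """Return the unwrap methods suggested for a mesh with this many faces.

    Hypothetical helper: Basic is always available, LSCM and ABF only
    below their documented face-count guidelines.
    """
    methods = ["Basic"]
    if face_count < LSCM_MAX_FACES:
        methods.append("LSCM")
    if face_count < ABF_MAX_FACES:
        methods.append("ABF")
    return methods


# The ~1.4 million triangle mesh from the default run is Basic only.
print(usable_unwrap_methods(1_400_000))
# A mesh decimated to 250,000 faces could try all three.
print(usable_unwrap_methods(250_000))
```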

Speeding up Meshroom 2021.1.0 by tweaking parameters

I have previously written a post about what parameters I used in 2019.2 and earlier. That post is now very out of date.

Here’s some things that, in some cases, drastically improve Meshroom 2021 performance:

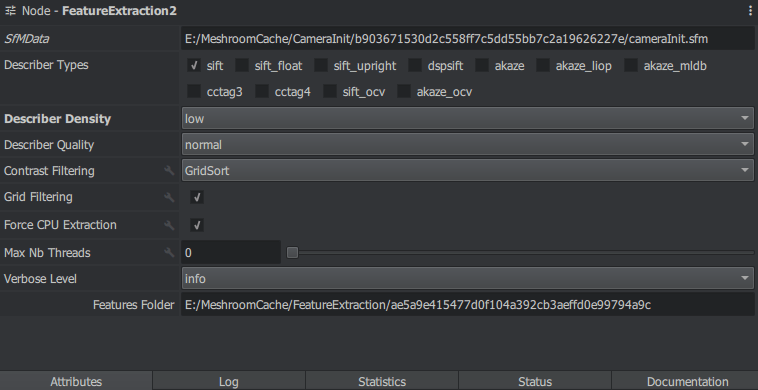

FeatureExtraction: Set the feature density to low.

Seriously – one of the biggest time sinks in the default settings, as shown in the table above, is Feature Extraction. I went to that node and set describer density to ‘low’:

That dropped the total time for Feature Extraction from 19 minutes, to 2 minutes. And it still matched all 53 cameras. The sparse point cloud had about half as many points though.

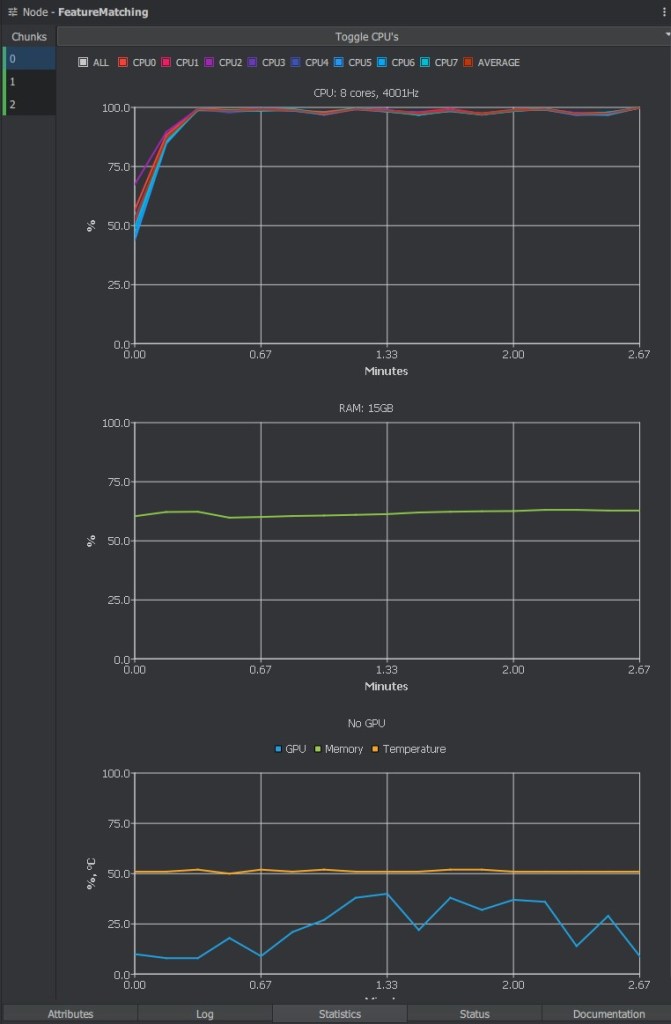

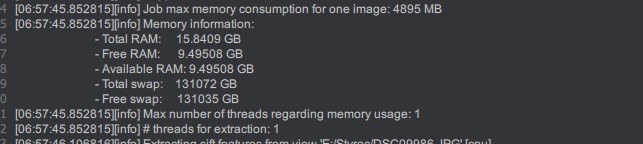

FeatureExtraction: Use the GPU

On the FeatureExtraction node there’s a check box for “Force CPU Extraction”. This is checked by default because on larger datasets (>200 images), the feature matching uses a vocab tree that hasn’t been trained on GPU features. However, unticking that box and leaving the Describer Density on Normal reduced the time taken from 19 minutes to 24 seconds, and 53 cameras still matched. That’s a significant speed-up. It could just be because my CPU is now 5-6 years old (though it’s still 8-core 4GHz), but it feels like a bug to me (especially as the CPU has access to 16GB of RAM, but my GPU only has 8GB).

FeatureExtraction: Change the Contrast Filtering Method to anything but GridSort.

Actually, I found the issue with it running on the CPU:

It seems that due to memory issues, the FeatureExtraction node insists on using only a single thread. The cause here was the ‘Contrast Filtering’ attribute – a new attribute that by default is set to GridSort. When running on the CPU, even with 16GB RAM, and describer quality and density set to ‘low’, the node insists on using only one core. Setting this parameter to ‘static’ reduced the node processing time to about a minute, and with it set to ‘AdaptiveToMedianVariance’ the node took about 2 minutes.

DepthMap and DepthMapFilter: Reduce MaxViewAngle

I picked this tip up on Twitter from @glebalexander: reducing the Max View Angle parameter can help speed up depth map generation. I reduced the default value of 70 down to 30 on both nodes. The result was that DepthMap took 13 minutes 18 seconds (down from 20 minutes 7 with the default value) and DepthMapFilter took 4:58 (down from 7:13), so each node took roughly a third less time. Meshing and MeshFiltering were a touch quicker too. The final model with these settings still looks great, and has 1.3 million faces:
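How big that saving looks depends on whether you measure time saved or speed gained. Here is a short Python sketch of both, using the timings quoted above; the helper names are just for illustration.

```python
def seconds(minutes, secs):
    """Convert minutes and seconds to a total number of seconds."""
    return minutes * 60 + secs


def time_saved(before, after):
    """Fraction of the original processing time that was saved."""
    return (before - after) / before


def speedup(before, after):
    """How many times faster the node ran."""
    return before / after


# (default Max View Angle of 70, Max View Angle of 30)
RUNS = {
    "DepthMap": (seconds(20, 7), seconds(13, 18)),
    "DepthMapFilter": (seconds(7, 13), seconds(4, 58)),
}

for node, (before, after) in RUNS.items():
    print(
        f"{node}: {time_saved(before, after):.0%} less time, "
        f"{speedup(before, after):.2f}x as fast"
    )
```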

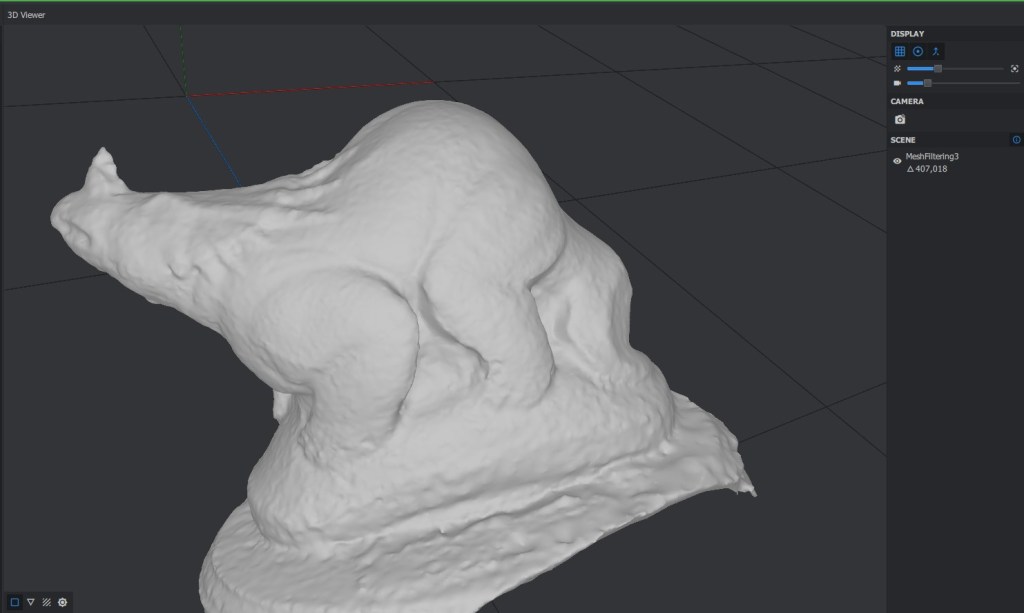

DepthMap: Downscale the DepthMaps

This one is fairly obvious – downscaling the depth maps reduces their resolution, and therefore makes processing much quicker. When I changed the downscale parameter from the default of 2 to 4, DepthMap took 12 minutes, DepthMapFilter took 1 minute 56, Meshing took 1:37, and MeshFiltering just 2 seconds. However, in this case the settings did obviously negatively affect the final mesh, which consisted of just 407,000 faces:

By using the above tweaks (GPU Feature extraction, DepthMap/Filtering Max View Angle at 30), I was able to get a fully textured mesh, of 1.35 million faces in just 35 minutes:

That’s not bad, and can probably be improved further by digging a bit deeper into the parameters. It’s significantly better than the 65 minutes stated in my all software comparison post, and it’s better than the default settings for both 2019.2 and 2021.1. However, it’s a far cry from the 5 minutes for Agisoft Metashape, and the 2 1/2 minutes for Reality Capture.
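If you want to keep track of the tweaks behind that 35-minute run, one option is to record them as data and turn them into override strings for a batch run. The Python sketch below rests on assumptions: the attribute names (`forceCpuExtraction`, `maxViewAngle`) are my guess at the internal names behind the UI labels, and the `NodeType:attribute=value` format is an assumed syntax for command-line parameter overrides. Check both against your own Meshroom install before relying on them.

```python
# Tweaks used for the 35-minute run, keyed by (node type, attribute).
# ASSUMPTION: these attribute names are guesses at the internal names
# behind the "Force CPU Extraction" and "Max View Angle" UI labels.
TWEAKS = {
    ("FeatureExtraction", "forceCpuExtraction"): False,  # untick the box
    ("DepthMap", "maxViewAngle"): 30,        # default is 70
    ("DepthMapFilter", "maxViewAngle"): 30,  # default is 70
}


def to_overrides(tweaks):
    """Format tweaks as 'NodeType:attribute=value' strings.

    ASSUMPTION: this string format is illustrative and should be checked
    against the help output of your Meshroom command-line tool.
    """
    return [f"{node}:{attr}={value}" for (node, attr), value in tweaks.items()]


for override in to_overrides(TWEAKS):
    print(override)
```

Keeping the settings in one place like this also makes it easier to compare runs when you change one parameter at a time.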

I love using free software, and try to do so where I can, but I have to admit that since the texturing node stopped making single textures for high-res meshes, back with Meshroom 2019.2, I’ve found myself using Agisoft Metashape for a lot more of my work. At $59 for the educational version, it’s much cheaper than Reality Capture, and really quick. BUT… $59 is a lot if you don’t have it, and Meshroom does do the job, with a bunch of advanced features that might be useful to you. It’s still, I think, the best all-in-one free software solution (providing you have a CUDA-capable Nvidia GPU), but the gap between free and commercial software is definitely widening now, and maybe that shouldn’t be too surprising.

Hey, thank you for your post.

What changes need to be made to the auto script you published for running Meshroom from cmd/PowerShell?

I’ve updated this here: https://peterfalkingham.com/2021/08/19/updated-batch-script-for-meshroom-2021/

What parameters did you use for the other two programs? I’m asking because a million and a tenth of a million isn’t an obvious difference, so it could have affected the results. I haven’t used any of the three yet but I’m just curious.

The other two programs being Meshroom versions 2018 and 2019? It would probably have been default, but they were 5/4 years ago. Million and tenth of a million of what? I started out with default settings, then explained lowering a couple of settings that I either didn’t previously lower, or weren’t available in earlier versions.