I’ve been testing out instant-ngp recently. It’s open-source software from Nvidia that generates neural radiance fields: a 3D representation of an object that is fundamentally different from the points, or vertices and faces, we may be used to. Rather than representing an object through 3D coordinates, connections between them, and colours (i.e. vertices and faces, plus textures), the digital representation is more about what light is doing at a given place in space. The result is something that looks incredibly realistic, and lacks the ‘edge-ness’ of traditional meshes.
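To make ‘what light is doing at a given place in space’ a bit more concrete, here is a minimal sketch of the volume-rendering idea from the original NeRF paper: sample a density and a colour at points along a camera ray, then blend them front to back. It is an illustration only, not instant-ngp’s actual code (which runs on the GPU); the function name `composite_ray` and the sample values are made up for the example.

```python
import math


def composite_ray(densities, colours, step):
    """Blend samples along one camera ray, front to back.

    densities: density (sigma) at each sample point along the ray
    colours:   (r, g, b) emitted at each sample point
    step:      distance between neighbouring samples
    """
    transmittance = 1.0  # fraction of light still unblocked so far
    pixel = [0.0, 0.0, 0.0]
    for sigma, rgb in zip(densities, colours):
        alpha = 1.0 - math.exp(-sigma * step)  # opacity of this sample
        weight = transmittance * alpha
        pixel = [p + weight * c for p, c in zip(pixel, rgb)]
        transmittance *= 1.0 - alpha
    return pixel, transmittance


if __name__ == "__main__":
    # Hypothetical ray: empty space, then a faint haze, then a dense red surface.
    sigmas = [0.0, 0.5, 50.0]
    rgbs = [(0.0, 0.0, 0.0), (1.0, 1.0, 1.0), (1.0, 0.0, 0.0)]
    print(composite_ray(sigmas, rgbs, step=0.1))
```

There is no surface anywhere in this: just densities and colours that a network has learned to return for any point, which is also why getting a clean mesh back out is not straightforward.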

Instant-ngp is Nvidia’s software, available on GitHub here: GitHub – NVlabs/instant-ngp: Instant neural graphics primitives: lightning fast NeRF and more. As you can imagine, an Nvidia CUDA-capable GPU is a necessity.

I saw lots of chatter about NERF replacing photogrammetry, so naturally I was interested. I’ll detail below the process for installing and running instant-ngp, and it really is fantastic, but the takeaway from my experience is that while it produces amazing 3D representations, there’s not a lot to do with them at the moment – you can’t easily use NERF reconstructions the way you can a mesh or point cloud. That may well change; at the very least, I know there’s a lot of ongoing research into meshing NERF representations optimally. Also, rather than replacing photogrammetry, NERF (or at least instant-ngp) still requires a traditional photogrammetry reconstruction to ascertain camera positions before producing the NERF, so it’s more of a branch or extension that might one day ‘replace’ the dense reconstruction/meshing stage of photogrammetry.

Installing instant-ngp

This was a bit of a pain, to be honest. I tried to install and run it through Windows Subsystem for Linux and got to the point where it would build, but then couldn’t get the GUI to play nice. So I ended up doing everything through Windows (and breaking some dependencies for other stuff I had running!)

First up, I had to install the CUDA toolkit: CUDA Toolkit 11.6 Downloads | NVIDIA Developer, which is a 3GB download.

I then had to make sure my Nvidia drivers were up to date in Windows.

I then installed the optional OptiX package.

Then I installed Visual Studio Community edition.

Oh, I think I needed CMake too, but I can’t remember now. It’s installed on my computer now though…

On top of that, my environment variables didn’t get updated, so I had to run everything through the Visual Studio Developer Command Prompt.

…Ugh.

After all that, I ran CMake, then opened the project in Visual Studio and hit compile…

…I think (it’s been a couple of months and I can’t remember the details, but I’m loath to reinstall all these dependencies and break it)
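Since half the pain here is not knowing which tools the current command prompt can actually see, a quick check can save some head-scratching. This is a minimal sketch, assuming the executables are called `nvcc` (CUDA toolkit), `cmake` and `colmap` (needed later for camera positions); those names are assumptions and may differ on your install.

```python
import shutil

# Executable names are assumptions; adjust them to match your installation.
TOOLS = ("nvcc", "cmake", "colmap")


def find_tools(names=TOOLS):
    """Map each tool name to its resolved path, or None if it is not on PATH."""
    return {name: shutil.which(name) for name in names}


if __name__ == "__main__":
    for name, path in find_tools().items():
        status = f"found at {path}" if path else "NOT FOUND on PATH"
        print(f"{name:8} {status}")
```

Running it from both a normal prompt and the Visual Studio Developer Command Prompt should show whether the environment variables are the problem.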

Running instant-ngp

So the first thing you need to do is run your photos through COLMAP to calculate camera positions. There are Python scripts to do this. Make sure you’ve installed COLMAP, and added the COLMAP binary to the PATH environment variable. I then set up my data as follows:

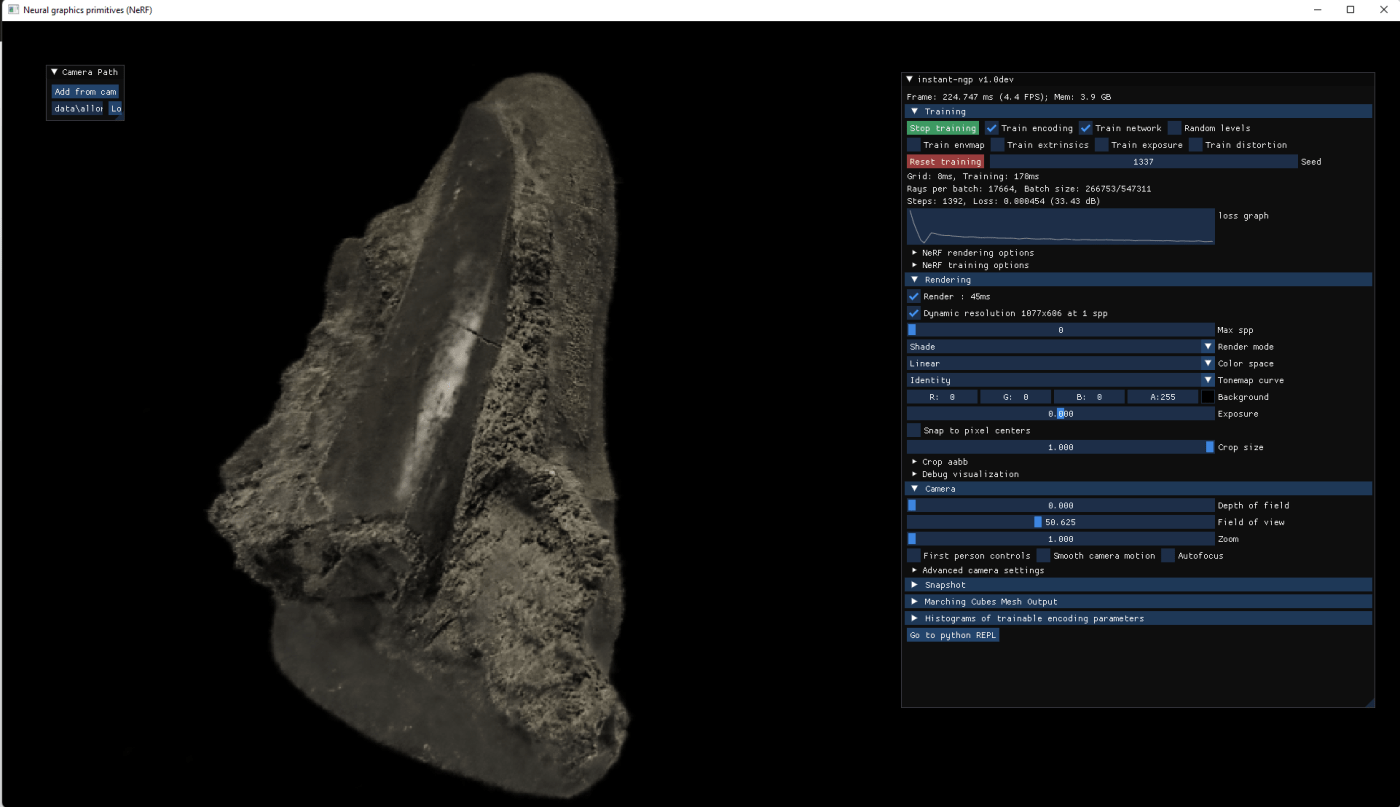

/instant-ngp/data/<project>/images

So, for a dataset of an allosaurus tooth, it looked like this:

/instant-ngp/data/allosaurus/images

And in the images folder were the 50 or so images I used to test it.
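If you want to sanity-check that layout before kicking anything off, something like the following would do it. It is only a sketch: the helper name `list_images` and the set of accepted file extensions are my assumptions, not anything instant-ngp defines.

```python
from pathlib import Path

# Assumed extensions; extend this if your camera writes something else.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def list_images(project_dir):
    """Return the image files found in <project_dir>/images, sorted by name."""
    images_dir = Path(project_dir) / "images"
    if not images_dir.is_dir():
        raise FileNotFoundError(f"expected an images folder at {images_dir}")
    return sorted(
        p for p in images_dir.iterdir() if p.suffix.lower() in IMAGE_SUFFIXES
    )


if __name__ == "__main__":
    # Run from the project folder, e.g. data/allosaurus.
    print(f"{len(list_images('.'))} images found")
```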

I then navigated into the ‘allosaurus’ folder and ran:

python ..\..\scripts\colmap2nerf.py --colmap_matcher exhaustive --run_colmap --aabb_scale 1

This calls a python script (colmap2nerf.py) that will run COLMAP using the exhaustive matcher on the jpgs in the images folder.
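If you end up doing this for several datasets, the same call can be scripted. The sketch below just wraps the command above using Python’s `subprocess`; the flags are the ones from that command, while the helper names `colmap2nerf_command` and `run_colmap2nerf` are made up for illustration.

```python
import subprocess
import sys


def colmap2nerf_command(script, matcher="exhaustive", aabb_scale=1):
    """Build the argument list for the colmap2nerf.py call shown above."""
    return [
        sys.executable, str(script),
        "--colmap_matcher", matcher,
        "--run_colmap",
        "--aabb_scale", str(aabb_scale),
    ]


def run_colmap2nerf(project_dir, script, **options):
    """Run colmap2nerf.py from inside project_dir (the folder holding images/)."""
    command = colmap2nerf_command(script, **options)
    # check=True raises CalledProcessError if the script exits with an error.
    subprocess.run(command, cwd=project_dir, check=True)
```

A relative script path is resolved from `project_dir`, so `run_colmap2nerf("data/allosaurus", "../../scripts/colmap2nerf.py")` mirrors running the command from within the allosaurus folder.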

To then run instant-ngp, navigate up a couple of directories and run:

\out\build\x64-Debug\testbed.exe --scene \data\allosaurus\

That will open instant-ngp and everything will look fairly blank…

…until it runs. And then the magic happens (I’ll show videos through tweets, as it’s easier than trying to embed them here):

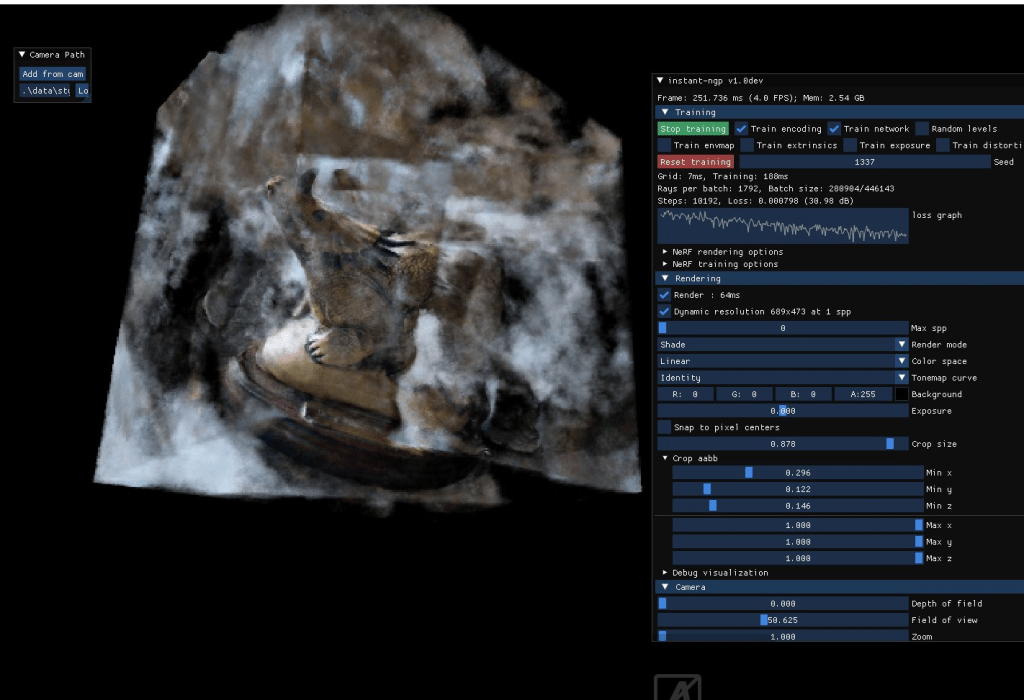

You can see from the video I posted to Twitter and the image above that the NERF reconstruction is really, really impressive, and doubly so given that it only takes seconds once the camera positions have been reconstructed. Even the reflectiveness of the shiny plastic is impressive.

However, as that video also shows, the meshing is not great, but we’ll come back to that after I talk about some other issues. The first is that your photo set needs to be really good. I first tried with my standard styracosaurus dataset that I use for all my photogrammetry testing, and it failed hard.

I then ran that dataset through REMBG to remove the backgrounds, and adjusted ‘scale’, which determines how far outside the area of interest the program reconstructs. Both changes made things a lot better:

Very cool and exciting, but I’m not convinced the fine detail is there. Will check another dataset. pic.twitter.com/7IxvTfGiss

— Peter Falkingham (@peterfalkingham) February 22, 2022
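For anyone wanting to try the background-removal step, here is a rough sketch using the `rembg` Python package (a third-party install, `pip install rembg`), whose `remove` function takes image bytes and returns the cut-out as PNG bytes. The helper names and the choice to write PNGs into a separate folder are my assumptions for illustration, not a record of exactly how the dataset above was processed.

```python
from pathlib import Path


def plan_outputs(images_dir, out_dir):
    """Pair each .jpg in images_dir with the .png path its cut-out will go to."""
    out_dir = Path(out_dir)
    return [
        (src, out_dir / (src.stem + ".png"))
        for src in sorted(Path(images_dir).glob("*.jpg"))
    ]


def remove_backgrounds(images_dir, out_dir):
    """Write a background-free PNG for every .jpg found in images_dir."""
    from rembg import remove  # third-party; imported here so planning works without it

    Path(out_dir).mkdir(parents=True, exist_ok=True)
    for src, dst in plan_outputs(images_dir, out_dir):
        # remove() takes the encoded image bytes and returns PNG bytes with alpha.
        dst.write_bytes(remove(src.read_bytes()))
```

Keeping the originals untouched and writing to a new folder means you can rerun COLMAP on either set and compare.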

Again, we’re seeing that the simple marching cubes algorithm in the software is borderline useless for any kind of organic shape (incidentally, it works significantly better on the lego set they have as an example with the code, presumably because that’s all flat surfaces). Even with the complimentary taxidermied fox dataset, the NERF is amazing, but the mesh not so much.

What that means is that this is fundamentally awesome technology, but at the moment there’s no way to get anything meaningful out of the software for scientific purposes. I’m absolutely sure there will be, either through the ongoing research into meshing, or perhaps we might see a move away from polygons towards radiance fields (I doubt it; voxels were awesome and they never really took off because GPUs have been designed around pushing polygons).

So yeah, currently very much experimental, but if this is a sign of things to come, I am very very excited.

Very interesting, thank you. I’d read a few introductory articles about NERF but the difficulty of getting a mesh of the object was always glossed over. And as you say it’s of limited use without it.

Yeah. I know it’s an active area of research, and it may advance rapidly (at the moment in instant-ngp, it’s just a really simple marching cubes algorithm as a proof-of-concept more than anything). But if I can’t export and measure, model, print and so forth, then this is just for visualization for now.

Hi there, you might find this implementation of neural 3D reconstruction interesting:

https://github.com/ventusff/neurecon

It links to the original papers for the following projects:

UNISURF, VolSDF and NeuS

Is it just instant-ngp that has issues with exporting meshes, or is that a general issue with the NERF approach that nobody has cracked yet?

As far as I can tell, meshing any NERF is difficult, and an active area of research.

This comment has aged like milk – Luma AI are doing great things with NERF, including some half-decent meshing. There’s also this: https://twitter.com/peterfalkingham/status/1527997586275287041

Would it be possible for you to share the instant-ngp exe, so we don’t need to go through the hassle of building it? 😉

Unfortunately there’s gigs of dependencies, so sharing the exe wouldn’t work… I don’t think even sharing the whole build directory would work unless your computer is set up _exactly_ like mine.