I’ve previously detailed my process for removing backgrounds from non-ideal photogrammetry data sets using Photoshop and its pretty neat object selection tool. Removing the background from your images means you can use the ‘void’ method without needing a full photography studio. For instance, I have a lightbox, but it’s only good for small objects, so it’s harder to photograph anything larger without the background getting into the shots.

Unfortunately, while the object selection tool in Photoshop is very good, actually using it on large datasets is a massive pain in the arse: it’s hard to automate with scripts, Photoshop laboriously opens each image one at a time, and then you have to manually select, invert the selection, delete, and save.

REMBG, by Daniel Gatis, was brought to my attention on Twitter by El Datavizzardo, and then later on the photogrammetry subreddit.

It’s available on GitHub here: GitHub – danielgatis/rembg: Rembg is a tool to remove images background.

It’s a Python tool, and it all runs from the command line. I followed the installation instructions on the GitHub page, but could not for the life of me get it running in Windows PowerShell. However, I did get it going in Windows Subsystem for Linux (WSL). The downside is that REMBG looks like it can be GPU accelerated, but WSL currently doesn’t support CUDA and GPU compute in production builds of Windows. I do happen to have a laptop running a Windows 11 developer build, and I didn’t notice any speed-up or GPU usage on that, so maybe I need to have a play with how I’m installing and running REMBG.
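One way to check whether a GPU is visible at all, assuming a recent rembg release that runs its models through onnxruntime (older releases were, as far as I know, built on PyTorch, where `torch.cuda.is_available()` does the same job), is to ask onnxruntime which execution providers it can use. This is a sketch; `uses_cuda` and `print_gpu_status` are hypothetical helper names.

```python
def uses_cuda(providers):
    """Return True if onnxruntime reports the CUDA execution provider."""
    return "CUDAExecutionProvider" in providers


def print_gpu_status():
    """Print which onnxruntime execution providers this environment exposes."""
    # Assumption: rembg runs on onnxruntime. The import lives here so that
    # uses_cuda() still works where onnxruntime isn't installed.
    import onnxruntime

    providers = onnxruntime.get_available_providers()
    print("Available providers:", providers)
    print("CUDA available:", uses_cuda(providers))
```

Run `print_gpu_status()` from the same Python environment rembg was installed into. If only `CPUExecutionProvider` shows up, either the CPU-only `onnxruntime` package is installed rather than `onnxruntime-gpu`, or CUDA simply isn't visible to that environment (as in WSL without GPU compute support).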

Anyway… It’s dead easy to run. Once it’s installed using pip, you just have to type into your terminal:

```
rembg -p <input_directory> <output_directory>
```

It’ll then take all the images in the input directory, and remove the background from them, placing the new images in the output directory.
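If you’d rather drive it from Python, the batch step can be sketched roughly like this. It assumes rembg’s Python `remove()` function, which accepts the raw bytes of an image and returns PNG bytes; the helper names, the list of file extensions and the `<stem>.png` output naming are my own choices for this sketch, and the real CLI may name files differently. (Newer rembg releases also seem to have moved folder mode to a `p` subcommand, `rembg p <input_directory> <output_directory>`, so check `rembg --help` if `-p` isn’t recognised.)

```python
from pathlib import Path

# File types picked up from the input folder (an assumption for this sketch).
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".tif", ".tiff"}


def output_path_for(image_path, output_dir):
    """Map an input image to a PNG path in the output folder, keeping its stem."""
    return Path(output_dir) / (Path(image_path).stem + ".png")


def batch_remove(input_dir, output_dir, remove_fn=None):
    """Remove the background from every image file in input_dir.

    remove_fn takes raw image bytes and returns PNG bytes. By default it is
    rembg.remove, imported lazily so rembg is only needed when actually used.
    """
    if remove_fn is None:
        from rembg import remove as remove_fn

    out_dir = Path(output_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    written = []
    for image_path in sorted(Path(input_dir).iterdir()):
        if not image_path.is_file() or image_path.suffix.lower() not in IMAGE_SUFFIXES:
            continue  # skip folders, sidecar files, etc.
        target = output_path_for(image_path, out_dir)
        target.write_bytes(remove_fn(image_path.read_bytes()))
        written.append(target)
    return written
```

Usage would be something like `batch_remove("input_directory", "output_directory")`. Keep the two folders separate, since a PNG input would otherwise be overwritten by its own cut-out.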

You can probably tell from the title of this post that the results were good, but how good exactly?

Very.

I took 300 images of an Ostrich tarsometatarsus on a rocky background, moving the bone between sets of about 50 photos. This is what a sample looks like:

And this is the output for those exact same images:

It took about 10 seconds per image (26 MP images), but of course it’s all automated and chugs away in the background, so that’s fine with me. I also found slightly better results using:

```
rembg -a -ae 15 -p <input_directory> <output_directory>
```

This is the alpha matting option recommended on the GitHub page.
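The same setting should be reachable from rembg’s Python `remove()` function through keyword arguments, assuming `alpha_matting` corresponds to `-a` and `alpha_matting_erode_size` to `-ae`. Roughly, alpha matting refines the soft edge of the mask, and the erode size sets how wide a band around the rough mask edge gets re-estimated. The wrapper name below is hypothetical, and `remove_fn` is only there so the function can be swapped or tested without rembg.

```python
def remove_with_alpha_matting(data, erode_size=15, remove_fn=None):
    """Cut out the background with alpha matting, mirroring `rembg -a -ae 15`.

    Assumption: rembg.remove accepts alpha_matting and
    alpha_matting_erode_size keyword arguments.
    """
    if remove_fn is None:
        from rembg import remove as remove_fn
    return remove_fn(data, alpha_matting=True, alpha_matting_erode_size=erode_size)
```

For a single file that might look like `Path("out.png").write_bytes(remove_with_alpha_matting(Path("in.jpg").read_bytes()))`, with `Path` from `pathlib`.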

The output files are PNGs, which they need to be because the background is transparent rather than a solid colour (JPEG has no transparency channel).

I’m genuinely shocked at how well it identified the object of interest in nearly all these photos, given it’s unlikely to have been trained on images like this. There were some minor errors: a couple of images had a little trouble, with either too much removed or not enough:

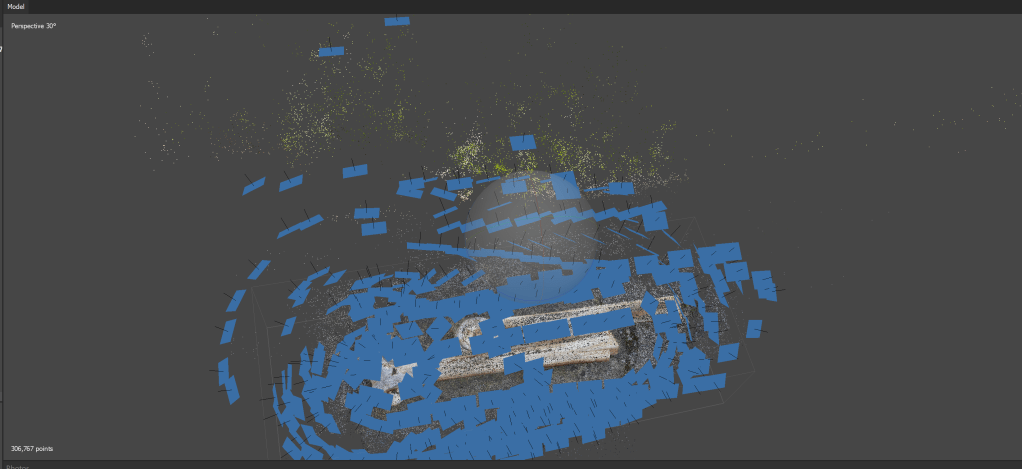
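With hundreds of images, a cheap way to find the frames where too much or too little was removed is to compare how much of each cut-out is left opaque. This is a sketch under my own assumptions: shots of the same object at a similar distance should keep a similar opaque fraction, so anything far from the median is worth a look. The alpha threshold of 128, the 50% tolerance and the function names are hypothetical choices, and reading the PNGs needs Pillow.

```python
from pathlib import Path
from statistics import median


def opaque_fraction(alpha_histogram, threshold=128):
    """Fraction of pixels with alpha >= threshold, from a 256-bin histogram."""
    total = sum(alpha_histogram)
    if total == 0:
        return 0.0
    return sum(alpha_histogram[threshold:]) / total


def flag_outliers(fractions, tolerance=0.5):
    """Names whose opaque fraction differs from the median by more than
    tolerance * median (0.5 means more than 50% above or below it)."""
    mid = median(fractions.values())
    return sorted(
        name for name, frac in fractions.items() if abs(frac - mid) > tolerance * mid
    )


def fractions_for_folder(folder):
    """Measure the opaque fraction of every PNG in folder (requires Pillow)."""
    from PIL import Image

    fractions = {}
    for path in sorted(Path(folder).glob("*.png")):
        with Image.open(path) as img:
            alpha = img.convert("RGBA").getchannel("A")
            fractions[path.name] = opaque_fraction(alpha.histogram())
    return fractions
```

Something like `print(flag_outliers(fractions_for_folder("output_directory")))` would then list the frames to check by eye.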

So what difference did it make to photogrammetry? Well, if I run the original photos (where there’s a nicely contrasting, patterned stone background, and the object’s position isn’t constant) through Metashape on High, then…

If we hide the cameras, you can see that it’s just each orientation overlain, a common issue with datasets where the object moves but the background doesn’t:

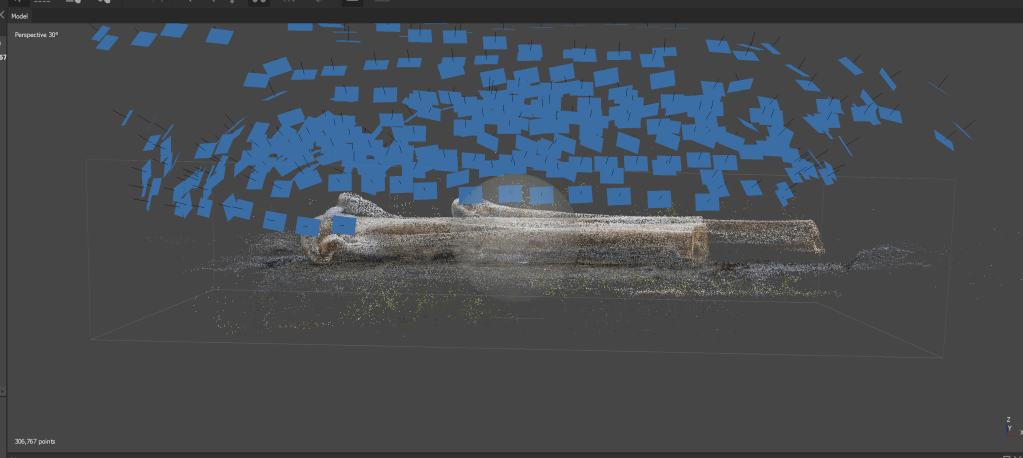

However, running the new background-removed set with the same settings, almost every single camera matched, resulting in an amazing reconstruction. The sparse cloud had some errant points here and there:

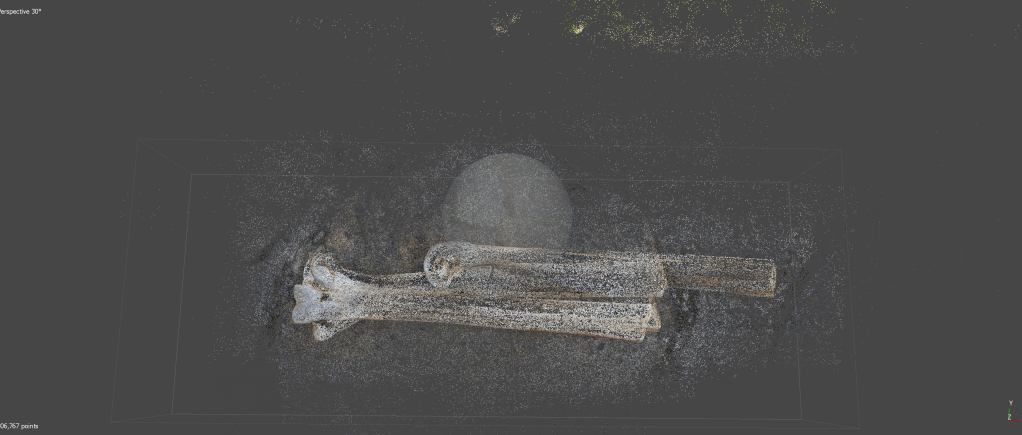

But the final mesh was nigh on flawless:

There are a few dark polygons separate from the main bone mesh, but otherwise there’s no background in sight, and Metashape treated the object as floating in space.

I can’t recommend REMBG enough: it’s awesome, and it’s going to be amazingly useful for digitizing specimens in the future. Daniel Gatis has a buy-me-a-coffee link on his GitHub or here: danielgatis (buymeacoffee.com). If you find Daniel’s software useful, buy that man a drink!

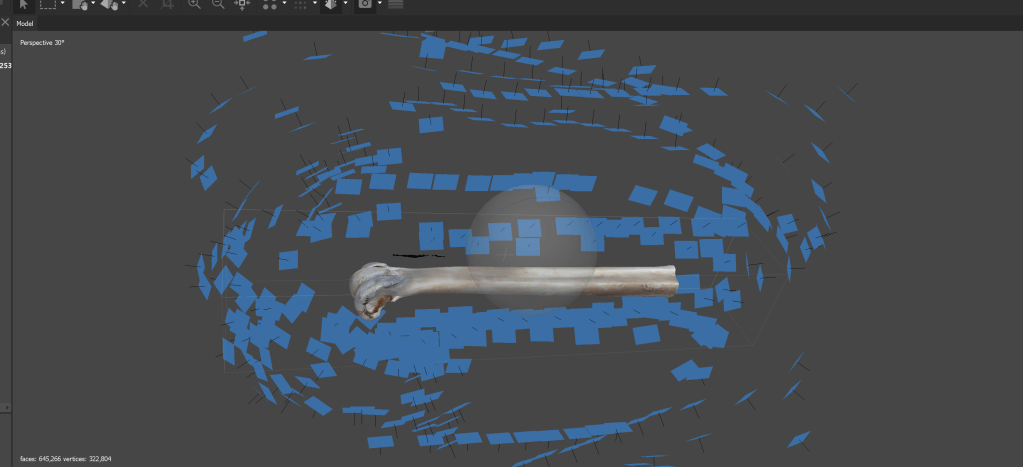

Here’s the finished bone, with all the others from the foot:

Tried REMBG on Xubuntu 20.04 to pre-process images (of megalithic stones) before feeding them to Meshroom and Agisoft Metashape. It certainly cuts out a lot of clutter around the final model and produces a model with better-defined edges: a definite must-have in a photogrammetry workflow.

Do you have an option for sending files with comments? I can send a screenshot of the original image + rembg image + Meshroom model + Agisoft model.

Great, glad that worked! You can either leave a comment or contact me directly; just upload a screenshot to Dropbox/OneDrive and share the link.

Hi, Peter!

Results on such a hard case are very inspiring – rembg worked like a charm!

Note that it can be integrated with Metashape via Python script – see automatic_masking.py in metashape-scripts repository on github

Cool – that’s good to know, thanks.

Thank you for sharing this useful information. However, for me, removing the background is not a simple thing. That’s why I usually use online background remover tools. They are really cool and extremely helpful. I believe you should research and use them.

Thanks. I’ve given Photoshop a go, and its background removal is excellent for more complex objects: https://peterfalkingham.com/2020/01/13/the-new-select-object-tool-in-photoshop-2020-is-a-lifesaver-for-photogrammetry/

The problem is, when you’re doing photogrammetry with hundreds or thousands of images, you need a way to do it in batch; you can’t do individual images. What kind of online background removal tools do you use?

Thank you so much for this. It worked amazingly to remove the background. Unfortunately, the images lost their camera properties in the process, and that is causing issues in Meshroom. Did you encounter this problem? If so, how did you resolve it? Any advice on how to deal with it?

I didn’t suffer from the loss of metadata, no; everything still aligned just fine. But I have used ExifTool in the past (https://exiftool.org/) to transfer metadata between images.
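As a rough sketch of that ExifTool step in batch, assuming each cut-out PNG has the same file name stem as its original photo: `-TagsFromFile` copies tags from the source file, `-all:all` keeps each tag in its original group, and `-overwrite_original` stops ExifTool leaving `_original` backup copies. The helper names are hypothetical, and whether Meshroom then reads the camera data back out of the PNGs is untested here.

```python
import subprocess
from pathlib import Path


def exiftool_command(original, cutout):
    """Build an ExifTool call copying all metadata from original to cutout."""
    return [
        "exiftool",
        "-overwrite_original",           # don't keep *_original backup files
        "-TagsFromFile", str(original),  # read tags from the original photo
        "-all:all",                      # copy every writable tag, keeping its group
        str(cutout),
    ]


def pair_by_stem(original_dir, cutout_dir, cutout_suffix=".png"):
    """Match each cut-out to the original photo with the same file stem."""
    originals = {p.stem: p for p in Path(original_dir).iterdir() if p.is_file()}
    return [
        (originals[cutout.stem], cutout)
        for cutout in sorted(Path(cutout_dir).glob("*" + cutout_suffix))
        if cutout.stem in originals
    ]


def copy_metadata(original_dir, cutout_dir):
    """Run ExifTool once per matched pair; raises if any call fails."""
    for original, cutout in pair_by_stem(original_dir, cutout_dir):
        subprocess.run(exiftool_command(original, cutout), check=True)
```

Calling `copy_metadata("input_directory", "output_directory")` needs `exiftool` on the PATH. Cut-outs without a matching original are simply skipped.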